This article, "What Is Edge AI and Its Applications", first appeared on AEWIN.


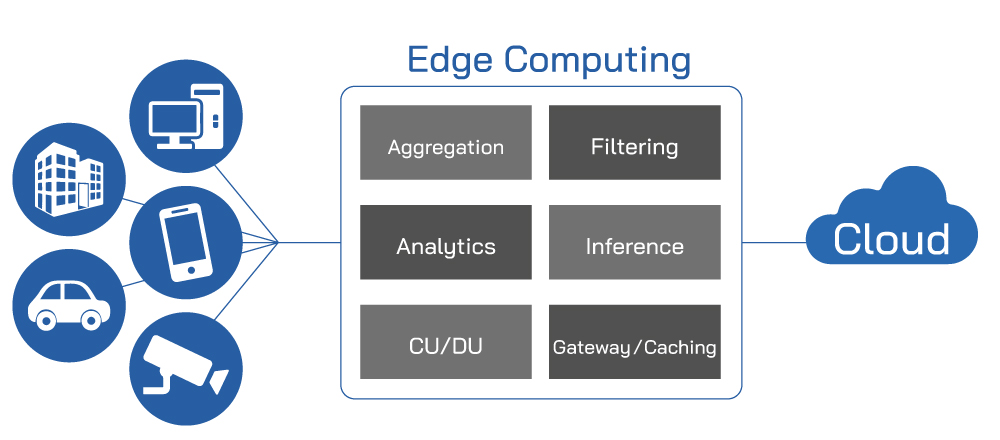

There are many definitions of the edge, but in general it can be defined as on-premises systems or forward-deployed servers in an edge cloud that serve a building, neighborhood, or city block. The proximity of these servers to users reduces the network traffic and latency otherwise incurred by traversing to the datacenter.

More: Edge AI solutions

Edge AI will continue to trend as the next logical step in the digital transformation of businesses, as more office tasks are augmented or replaced by digital workflows. Smart applications can help digitize documents or provide instant responses to customer queries through AI-assisted chatbots. These smart applications are just the tip of the iceberg of what AI can do to improve organizational efficiency and provide more data for analytics than ever before.

Accelerated AI computing also has applications in improving city life. Smart city solutions can give city planners valuable and precise data on vehicle traffic, foot traffic, hot zones, and many other metrics. This data can help planners formulate future infrastructure upgrades and plan for transportation, traffic, and future city expansion. It also has uses in city operations.

Live video feeds are becoming the norm in many cities. These cameras, augmented with a visual inference engine, can be used for traffic regulation enforcement, reducing the manpower required for minor traffic violations and freeing up valuable human resources for exceptional cases or active criminal activity.

We’re at the exploration stage of AI evolution, experimenting with how AI can improve every facet of our lives. To host these new cutting-edge solutions, the hardware needs to be reliable and able to keep up with the demands of high-powered GPUs and other application-specific accelerators. AEWIN systems are Edge ready: they are compact, and some are designed for higher operating temperatures by default. The front-access design gives GPUs access to the coolest air, helping to keep them at peak performance wherever they are deployed.

Furthermore, our workload optimization team fine-tunes firmware for the highest performance in application-specific benchmarks based on the customer’s configuration. This is appreciated by ISVs that do not have the volume to drive customizations from big-brand server makers. AEWIN’s ever-expanding Edge AI product portfolio allows reliable and rapid development of innovative smart applications that can accelerate your business.

Read more:

AEWIN has the right solutions for your Edge AI computing needs.

AEWIN Edge AI accelerator support guide.


This article, "AEWIN Launches Its Latest Edge AI Device, the BIS-3101", first appeared on AEWIN.


AEWIN is proud to announce the latest addition to our edge AI focused portfolio, the BIS-3101. This diminutive system features Intel Embedded 9th Gen Core processors for long-term reliability, paired with your choice of a GeForce RTX 3080 GPU or the datacenter-focused NVIDIA Tesla T4. The BIS-3101 comes in a workstation form factor that allows deployment in edge applications where a server room or prepared location is not available, while offering high-performance edge AI inferencing.

Modern businesses increasingly rely on smart applications that require the power of a GPU for inference workloads. Local availability of GPU compute reduces the dependence on internet connectivity and allows inference workloads to keep working for your business even when an internet connection is unreliable, or inadvisable because sensitive data is being processed.

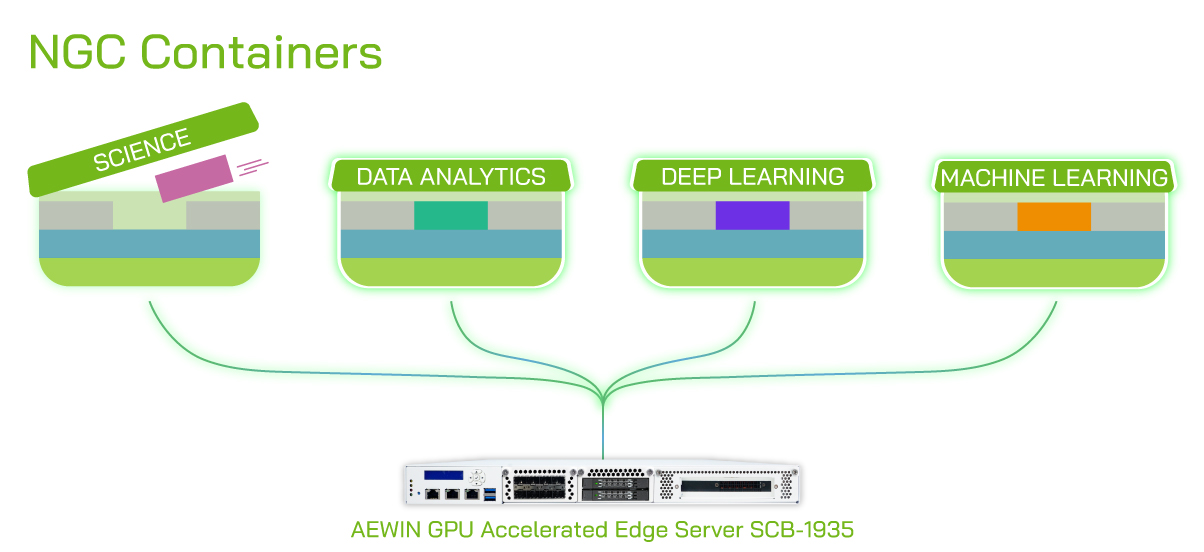

The NVIDIA NGC toolkit can expedite the development of applications that leverage the power of the GPU through the familiar CUDA toolkit and are deployed through Kubernetes and containers. This shortens the path from concept to program to deployment, so business services can be delivered as quickly as possible. With the Kubernetes container management system, large-scale deployment across the globe is possible using standard Kubernetes commands.
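As a rough sketch of the container-based deployment described above, the following Python builds a Kubernetes Deployment manifest that requests one GPU per pod. The `nvidia.com/gpu` resource name is the one exposed by the NVIDIA device plugin for Kubernetes, which is assumed to be installed on the cluster; the deployment name and image reference are hypothetical placeholders, not AEWIN or NVIDIA defaults.

```python
import json


def gpu_inference_deployment(name, image, replicas=1, gpus_per_pod=1):
    """Return a Kubernetes Deployment manifest (as a dict) that requests GPUs.

    Assumes the cluster runs the NVIDIA device plugin, which exposes GPUs
    under the extended resource name "nvidia.com/gpu".
    """
    labels = {"app": name}
    container = {
        "name": name,
        "image": image,
        # GPUs are requested through resource limits, like CPU or memory.
        "resources": {"limits": {"nvidia.com/gpu": gpus_per_pod}},
    }
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [container]},
            },
        },
    }


# Hypothetical image reference; substitute a real NGC container and tag.
manifest = gpu_inference_deployment(
    "edge-inference", "nvcr.io/nvidia/example-inference:latest"
)
print(json.dumps(manifest, indent=2))
```

The printed JSON can be saved to a file and applied with `kubectl apply -f`, since Kubernetes accepts manifests in JSON as well as YAML.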

The BIS-3101 is a strategic device and a welcome addition for any business that wishes to deploy an Edge AI appliance. It supports familiar x86-based software along with NVIDIA’s powerful CUDA toolkit, enabling rapid development of innovative smart applications that can accelerate your business.

Read more:

- What AEWIN customers are doing with our servers?

- AEWIN has the right solutions for your Edge AI computing needs.

- Mobile Edge AI / Multi-access Edge Computing


This article, "AEWIN Edge AI Computing Capabilities", first appeared on AEWIN.


Deliver AI on AEWIN Edge Servers

We’re experiencing the dawn of the Age of AI, as NVIDIA founder and CEO Jensen Huang described in his vision at the recent GTC event. AI continues to be a hot topic in the news and blogosphere. According to Intel research, 75% of enterprise applications will use AI by 2021. This creates a large demand for GPU servers to host these new enterprise-focused applications. AEWIN, being a network appliance specialist, has tangible advantages over traditional datacenter server makers that are trying to miniaturize their servers to fit the constraints of edge deployment, with the added twist of the TDP of GPU accelerators.

Our network-focused designs are Edge ready: they are compact and designed for higher operating temperatures by default. The front-access design gives GPUs access to the coolest air, helping to keep them at peak performance wherever they are deployed. Our designs have been refined over generations, whereas datacenter-focused manufacturers are validating their designs for the first time without the trials of scaled deployments. With AEWIN, you’re buying a robust and tested design without the hassle of being the manufacturer’s test case.

As exemplified in our previous blog post (HERE), we’re not satisfied with just changing a few mechanical bits to accommodate GPUs. We benchmark intensively with NGC containers and fine-tune firmware to offer the best performance possible. All of our experience working with the NVIDIA NGC container platform is available for your projects. Our goal is to enable our customers to quickly deliver AI on AEWIN edge servers, which is the title of this blog post! As a member of the AEWIN Edge Servers team, I am hugely excited to finally let the fruits of our labor be known. Please don’t be shy; come talk to us about GPU-powered edge servers.



Video Analytics – Fine Tuning for Big Gains

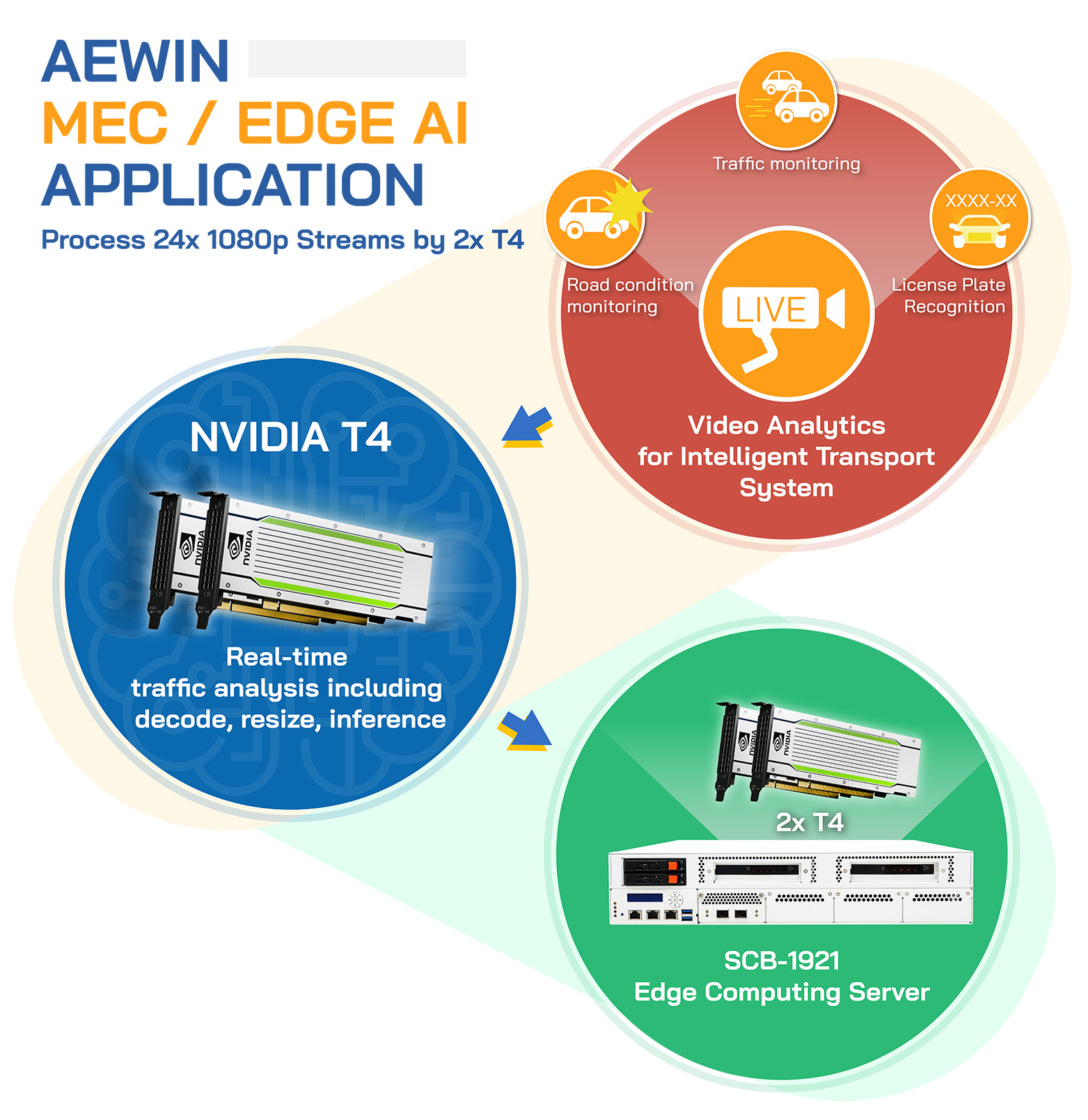

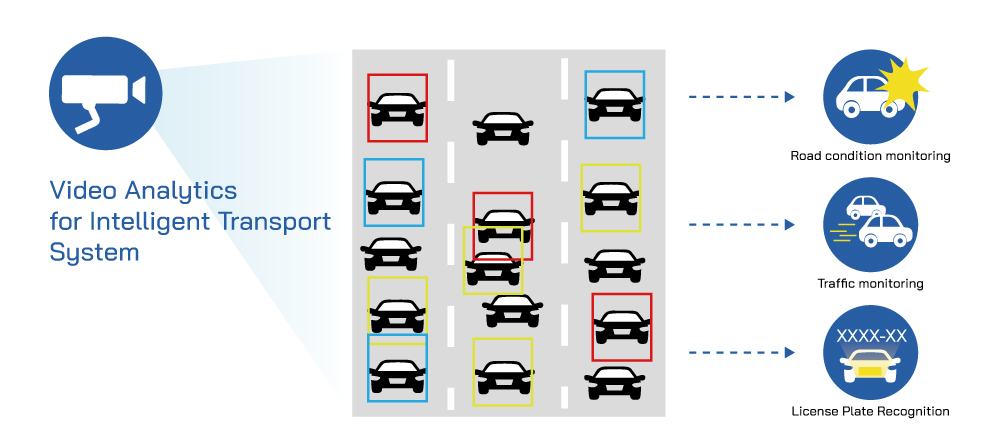

AEWIN has been working hard to get the highest performance out of your hardware investments. We were tasked with providing GPU-accelerated MEC edge servers for a video analytics application: accelerated live traffic monitoring, which uses the NVIDIA T4 to decode live video feeds and perform AI inferencing to detect traffic patterns by vehicle type, collisions, traffic violations, license plates, and other useful metrics.

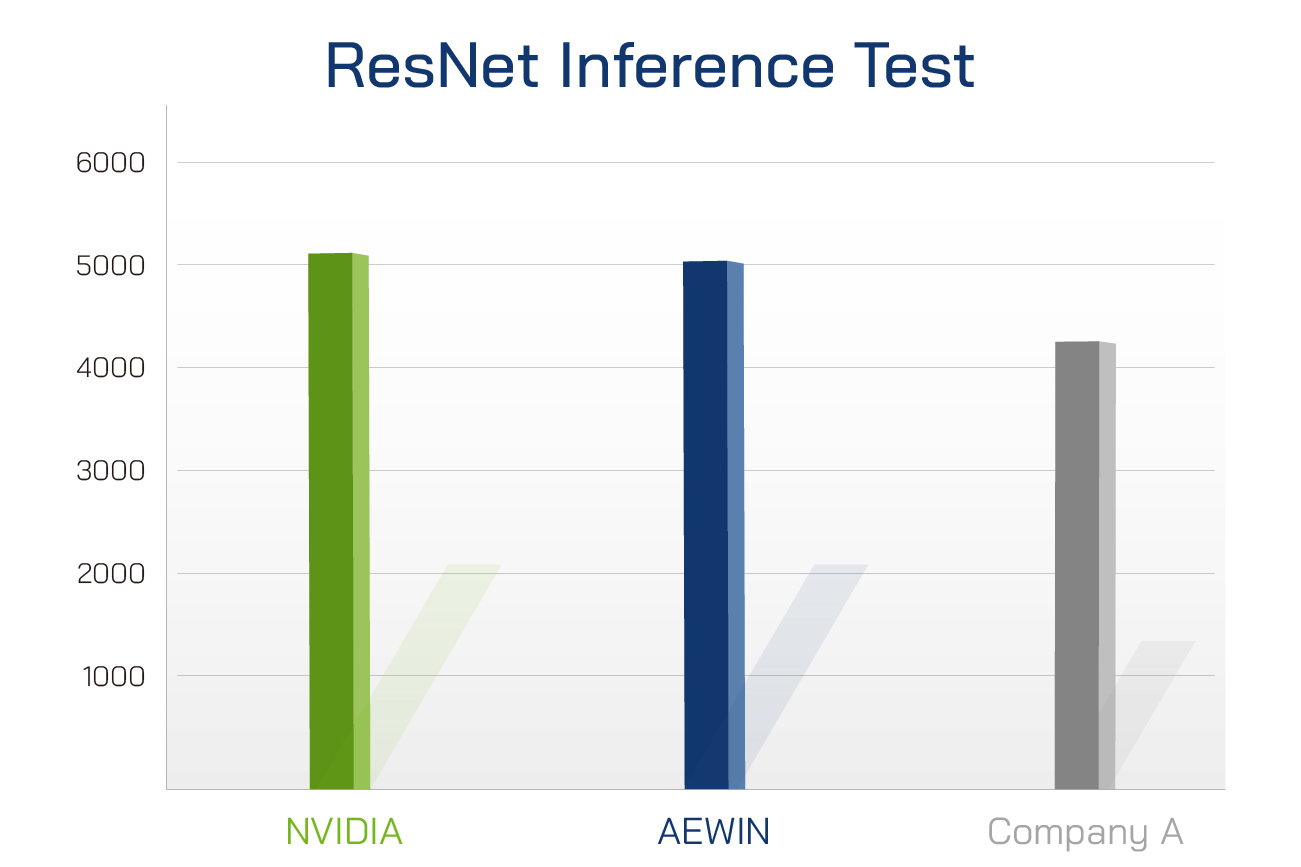

This seems like an easy task for servers to handle. However, not all servers are created equal. Off-the-shelf servers are typically optimized for a variety of workloads, making them a jack of all trades and master of none. Our MEC servers and engineers have been steadily focused on maximizing GPU acceleration performance. We received customer praise for handling 24 full HD live streams, compared with their previous system, which could process only 18 streams with a similar setup. Through continued testing and fine-tuning, we’ve exceeded the performance of some of the biggest names in the server space and closely matched the benchmark published by NVIDIA.
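To put the stream counts above in perspective, the short calculation below works out the relative gain of 24 streams over 18. Only the two stream counts come from the text; the function name is ours, for illustration.

```python
def relative_gain(new_streams, old_streams):
    """Fractional increase in concurrent streams over the previous system."""
    return (new_streams - old_streams) / old_streams


gain = relative_gain(24, 18)
print(f"{gain:.1%} more streams")  # prints "33.3% more streams"
```

In other words, the tuned system handles a third more concurrent full HD streams than the previous setup.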

Table: ResNet-50 v1.5 + TensorRT Inference Test

*Test setup: SCB-1921 MEC version + 2x NVIDIA T4 vs. published numbers from NVIDIA and competitors

This is the difference between going with a general-purpose server from other manufacturers and going with AEWIN. Personalized collaboration enables us to fine-tune the performance of the servers and GPUs for your specific workloads. Our manufacturing, located right next to our engineering headquarters here in New Taipei City, gives us the flexibility to work with customers on projects from small scale to large. Our experienced engineers are available to offer insights and recommend system configurations. Bring your projects to AEWIN before deciding on your next GPU-accelerated platform and see how AEWIN can help you get the most performance out of your investment.

SCB-1921

Edge Computing Server

- Supports dual 2nd Gen Intel Xeon Scalable Processors

- Supports 2933MHz system memory

- 4x PCIe x8 for Network Expansion Module, plus 4x PCIe x8 or 2x PCIe x16 for GPU or FPGA

This article, "AEWIN Accelerates AI Visual Inference with NVIDIA T4 GPU Accelerators", first appeared on AEWIN.


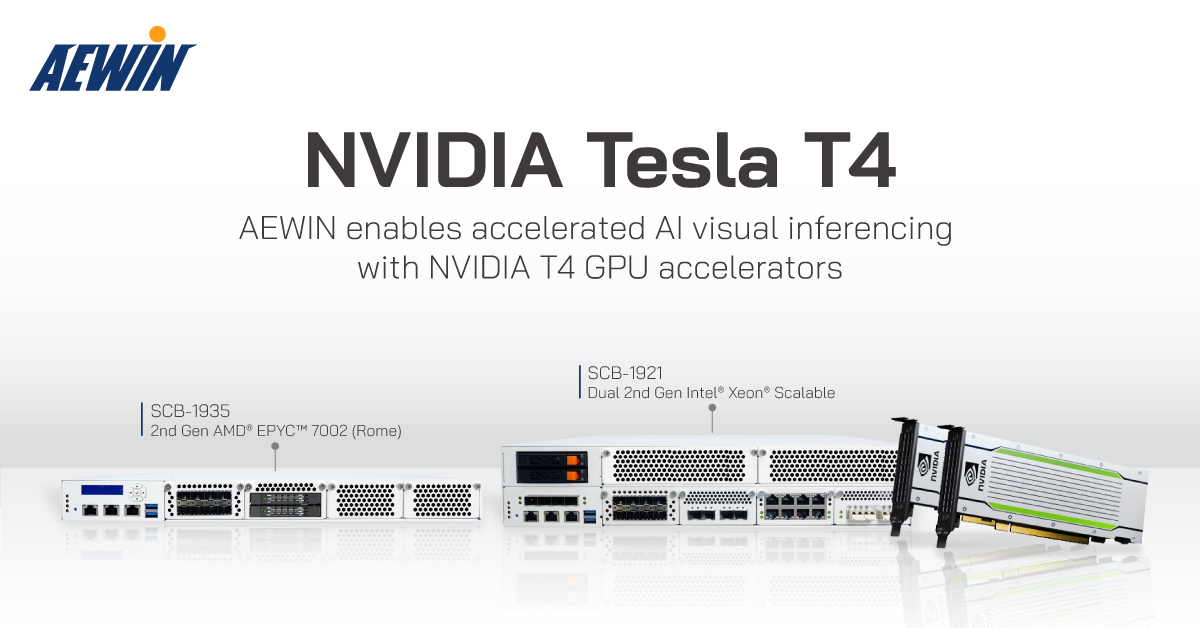

AEWIN enables accelerated AI visual inferencing with NVIDIA T4 GPU accelerators

AEWIN has delivered to partners the SCB-1921 and SCB-1935 equipped with the inference champion, the NVIDIA T4 GPU. These GPUs decode live video streams, run inference, and output useful data for advanced analytics of traffic patterns, license plate recognition, traffic conditions, and more. This output can be immensely useful for city and traffic planning, and it enables a future of traffic management in which live traffic is rerouted around obstacles and accidents to improve overall throughput and reduce the risk of further collateral damage. AEWIN is working hard to bring GPU-accelerated AI inferencing to a diverse range of servers and MEC platforms. Please come and talk to us about your AI inference needs.


This article, "MEC – solutions and use cases", first appeared on AEWIN.

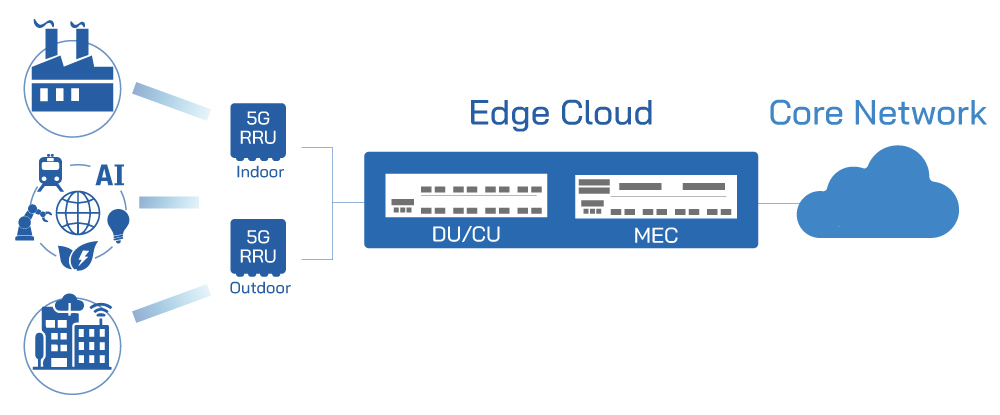

The aim of MEC is to provide edge services directly on or near cellular network radios. This opens up a whole new world of applications, along with hardware requirements to match. To allow third-party applications to run on the Radio Access Network (RAN), mobile operators need to make some infrastructural changes. The first is a transformation to allow multi-tenancy on the RAN hardware. Leading this change is vRAN (virtual RAN), which is built on the same hardware abstraction technologies as VNFs and other virtualized systems and runs on top of a hypervisor to enable multiple isolated virtual machines. Another area that needs to advance is the management and orchestration (MANO) software that deploys and manages the vRAN and the authorized third-party VMs hosting additional applications. The hardware is changing as well, as the industry moves toward generic x86 platforms to avoid vendor lock-in. Additional processing power and storage may be required to enable new applications or content caching.

Putting it all together, MEC aims to run new applications very close to new virtualization-optimized RAN hardware, offering the lowest latency and reducing traffic on the core network. AEWIN is doing its part to enable deployments in this area. We are working with partners to implement O-RAN compliant MEC servers in the 5G arena, using the RU (Radio Unit) and combined CU (Centralized Unit) / DU (Distributed Unit) architecture, which suits deployments from large mobile operators to smaller private 5G networks. The secret sauce is the Xilinx FPGA, which provides acceleration to ensure the highest bandwidth for 5G users.

A key way to enhance the computational power of edge servers is to add FPGAs and GPUs. These accelerators have potential in many fields, such as high-performance gaming, data/network traffic analytics, compression to save network bandwidth, IoT sensor data analytics, connected cars, and even real-time AI inference workloads. AEWIN has been diligently working with partners to enable the last of these.

AI and GPU accelerators have been used for inferencing for the past few years; however, the emergence of edge servers has made wide deployment of these real-time AI platforms possible. AEWIN and its partner have been using edge servers to realize real-time road traffic analytics. The real-world application of this technology is traffic management and planning. Although transportation planners already collect traffic pattern data, AI can offer more data and more ways to analyze it. Beyond basic speed and throughput, AI can also track distance traveled and identify points of slowdown, dangerous road conditions, high-accident segments, and more. The eventual deployment of these systems will help city and traffic planners build more efficient and safer public roadway systems.
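As an illustration of how metrics such as distance traveled and points of slowdown might be derived once an inference pipeline has produced vehicle tracks, here is a minimal sketch. The track format, the 100 m segment length, the 5 m/s threshold, and the sample data are all assumptions made for the example; they do not describe the deployed system.

```python
from collections import defaultdict


def segment_speeds(tracks, segment_length_m=100.0):
    """Average speed (m/s) per road segment from vehicle tracks.

    tracks maps a vehicle id to a time-ordered list of
    (timestamp_s, position_m) samples along the road.
    """
    distance = defaultdict(float)  # metres covered, keyed by segment index
    duration = defaultdict(float)  # seconds spent, keyed by segment index
    for samples in tracks.values():
        for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
            if t1 <= t0:
                continue  # skip out-of-order or duplicate samples
            # Attribute each step to the segment where it started.
            seg = int(p0 // segment_length_m)
            distance[seg] += abs(p1 - p0)
            duration[seg] += t1 - t0
    return {seg: distance[seg] / duration[seg] for seg in duration}


def slow_segments(speeds, threshold_mps=5.0):
    """Segment indices whose average speed is below the threshold."""
    return sorted(seg for seg, v in speeds.items() if v < threshold_mps)


# Hypothetical tracks: two vehicles, one sample every 5 seconds.
tracks = {
    "car-1": [(0, 0.0), (5, 75.0), (10, 150.0), (15, 160.0)],
    "car-2": [(0, 20.0), (5, 95.0), (10, 170.0), (15, 185.0)],
}
speeds = segment_speeds(tracks)
print(speeds)                 # {0: 15.0, 1: 2.5}
print(slow_segments(speeds))  # [1]
```

With this sample data, both vehicles move freely through the first 100 m and slow sharply in the second segment, which is flagged as a slowdown point.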

There is a multitude of potential uses for computing power right at the edge; however, there is no single killer app. Some are still trying to make heads or tails of what to do with computing power so close to the edge. To start, instead of thinking about what it can do for your business, think about what benefit you can bring to your customers: which of the services you offer would deliver a better customer experience through lower latency, or which new applications could draw in new customers.

Thinking this way can start you down the path of building a sustainable and profitable edge computing deployment; we cannot be stuck in the “if you build it, they will come” mentality. These new services have to bring tangible benefits to your users. A topical example is video streaming and CDNs. Fast, instant access to high-definition 4K video is a boon to those of us who prefer a night in on the couch to a night out as the pandemic continues. Direct access to libraries’ worth of movies and TV shows without worries about network bandwidth or latency is a tangible benefit. Think about what value you can bring to the customer and what they are willing to pay for, then build a monetization structure around these improved services. Figuring out the right model requires a lot of thinking and hypotheticals, and it is unique to every company’s product mix. We’re looking forward to talking to you about your applications!

- SCB-1830

- SCB-1921

- SCB-1935


This article, "AEWIN has the right solutions for your Edge AI computing needs.", first appeared on AEWIN.

AEWIN has the right solutions for your Edge AI computing needs.

As users generate and consume more data over the mobile network, don’t let the cloud be a bottleneck in your network. Put the compute power of the cloud near end users to offer a better experience through lower latency and near-instant response times. Reduce network traffic with onboard data storage and aggregation, enable cloud gaming with GPU acceleration, or even run AI inferencing on onboard accelerators. Enable new and exciting use cases with AEWIN’s MEC devices to accelerate your network.

Read more:

- AEWIN announces the latest addition to our edge AI focused portfolio

- SCB-1937 update to AMD EPYC 7003 Milan

- Deliver AI on AEWIN Edge Servers


This article, "Mobile Edge AI Computing / Multi-access Edge Computing", first appeared on AEWIN.

Edge AI cloudification with MEC hardware reduces the network traffic destined for a centralized cloud server location, thereby reducing the bandwidth needed. Additional services, such as a content delivery network (CDN) and local caching, can be deployed to further reduce the cost of moving data around while offering a better experience for users. The lower latency offered by closer proximity enables a wide range of exciting new applications, such as high-performance gaming, cloud gaming, real-time VR/AR services, and data analytics.
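The effect of local caching on upstream traffic can be illustrated with a toy least-recently-used (LRU) cache. This is only a sketch: the `EdgeCache` class, the capacity of two objects, and the fixed 1000-byte object size are hypothetical, and a production CDN node is considerably more involved.

```python
from collections import OrderedDict


class EdgeCache:
    """Tiny LRU cache that counts bytes fetched from the origin."""

    def __init__(self, capacity, fetch_from_origin):
        self.capacity = capacity        # maximum number of cached objects
        self.fetch = fetch_from_origin  # callable: key -> bytes
        self.store = OrderedDict()
        self.origin_bytes = 0           # traffic that crossed the core network

    def get(self, key):
        if key in self.store:
            self.store.move_to_end(key)  # mark as most recently used
            return self.store[key]
        data = self.fetch(key)           # cache miss: go to the origin
        self.origin_bytes += len(data)
        self.store[key] = data
        if len(self.store) > self.capacity:
            self.store.popitem(last=False)  # evict least recently used
        return data


# Hypothetical origin: every object is 1000 bytes.
cache = EdgeCache(capacity=2, fetch_from_origin=lambda key: b"x" * 1000)
requests = ["a", "b", "a", "a", "c", "a"]
for key in requests:
    cache.get(key)
print(cache.origin_bytes)  # 3000
```

Six requests would pull 6000 bytes from the origin without a cache; here only the three misses do, so half of the traffic never leaves the edge.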

Leveraging technologies and experience from the cloud, MEC hardware is optimized for running virtual machines and designed to fit into limited space with a more compact form factor. This hardware abstraction opens up the possibility of running commercial off-the-shelf equipment as a platform for additional virtualized network appliances, as well as hosting middleware to enable additional services. 5G is one of the hottest topics of 2020, and vRAN running on MEC can be integrated into the 5G ecosystem to provide the flexibility and scalability needed for the wave of new 5G-enabled devices that require a constant internet connection.

Read more:

What is Edge AI? How about Edge AI application?

Mobile Edge Computing / Multi-access Edge Computing



7002 (Rome) processors
