This article, AEWIN 3rd Generation Intel Xeon Series Products | Network Appliances | Edge Computing Servers | General-Purpose Servers, first appeared on AEWIN.

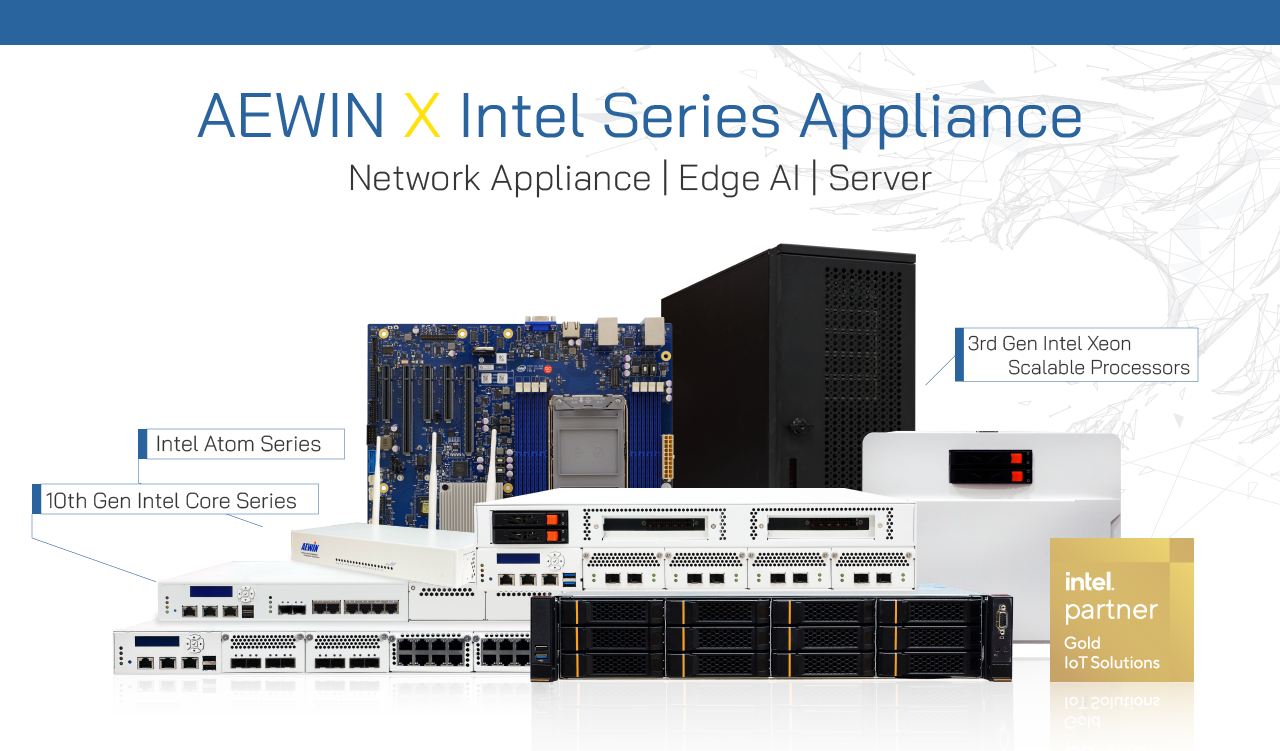

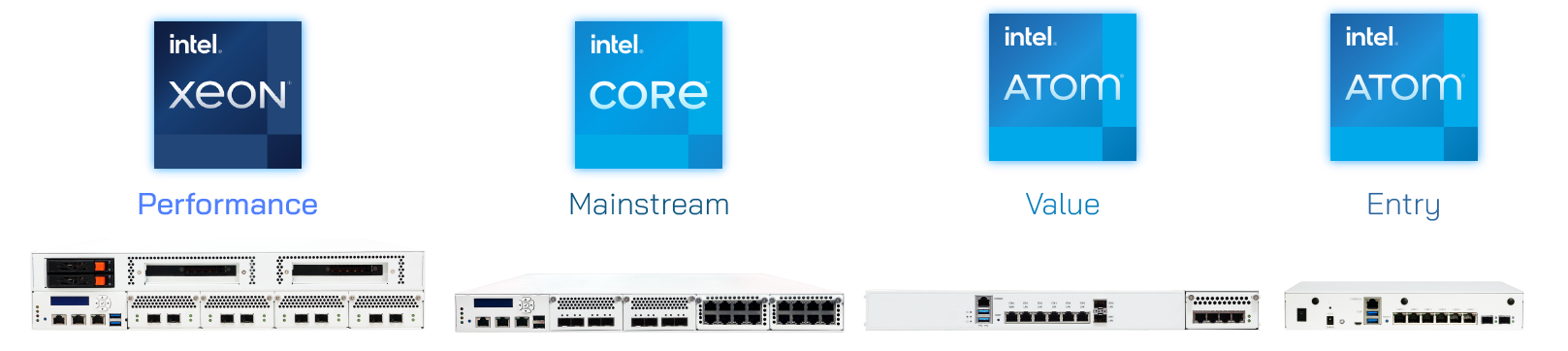

Intel® 3rd Generation Xeon Scalable processors, based on the advanced Ice Lake-SP architecture, have been refined over the previous generation with architectural updates that bring a giant leap in performance. The performance gains come from core counts of up to 40 cores per socket, up to eight channels of DDR4-3200 memory, and support for Intel Optane Persistent Memory 200 series (code name Barlow Pass). Platform security has also been enhanced: in addition to SGX, already introduced on previous platforms, TME (Total Memory Encryption), Crypto Acceleration, and Intel PFR (Platform Firmware Resilience) help harden the platform against malicious actions. AEWIN has integrated these transformative processors into its latest platforms.
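To put "eight memory channels" into numbers, the theoretical peak memory bandwidth per socket can be worked out from the data rate and the channel width. This is a back-of-the-envelope sketch, assuming DDR4 at a data rate of 3200 MT/s and the standard 64-bit (8-byte) DDR4 channel; the function name is ours for illustration, and sustained bandwidth in real workloads is lower than this peak.

```python
def peak_memory_bandwidth_gbs(channels: int, mega_transfers_per_s: int, bus_bytes: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (decimal, 10**9 bytes).

    Each DDR4 channel is 64 bits (8 bytes) wide and every transfer moves one
    bus width of data, so peak = channels * transfers per second * bytes.
    """
    bytes_per_second = channels * mega_transfers_per_s * 1_000_000 * bus_bytes
    return bytes_per_second / 1e9


# Eight channels of DDR4 at 3200 MT/s per socket.
per_socket = peak_memory_bandwidth_gbs(channels=8, mega_transfers_per_s=3200)
print(f"Per socket: {per_socket:.1f} GB/s")       # Per socket: 204.8 GB/s
print(f"Two sockets: {2 * per_socket:.1f} GB/s")  # Two sockets: 409.6 GB/s
```

The same function can be reused for other memory configurations by changing the channel count or data rate.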

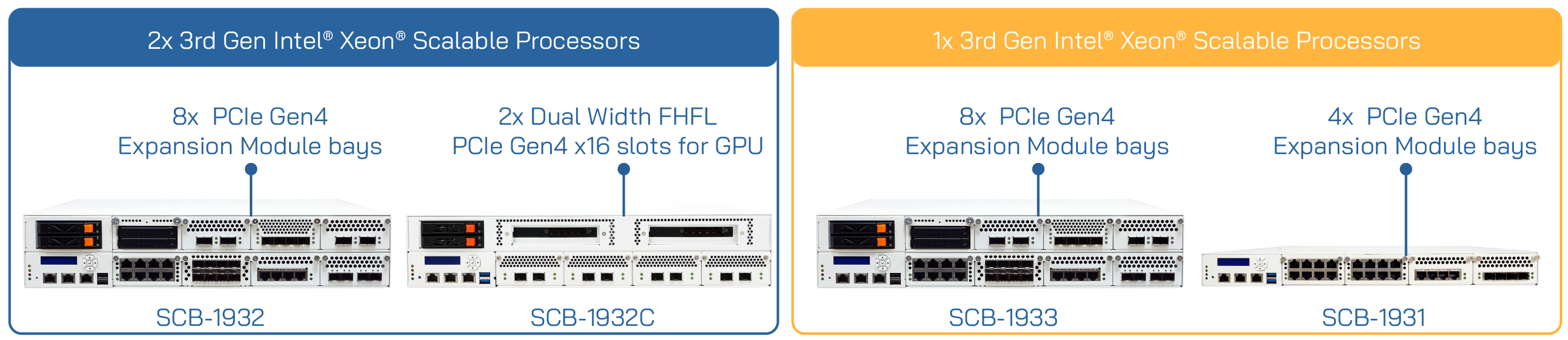

The AEWIN SCB-1932 is a 2U, 2-socket network computing platform that is highly configurable via PCIe accessories and Network Expansion Modules. With up to 8x PCIe Gen 4 expansion module slots supporting up to 100GbE, this versatile system can satisfy many use cases in a single chassis. The SCB-1932C is a specialized SKU that supports multiple dual-width GPU accelerators. The platform is ideal for NFVI and MEC/vRAN applications, as well as high-performance Edge AI workloads. Single-socket variants are also available as the 1U SCB-1931 and the 2U SCB-1933.

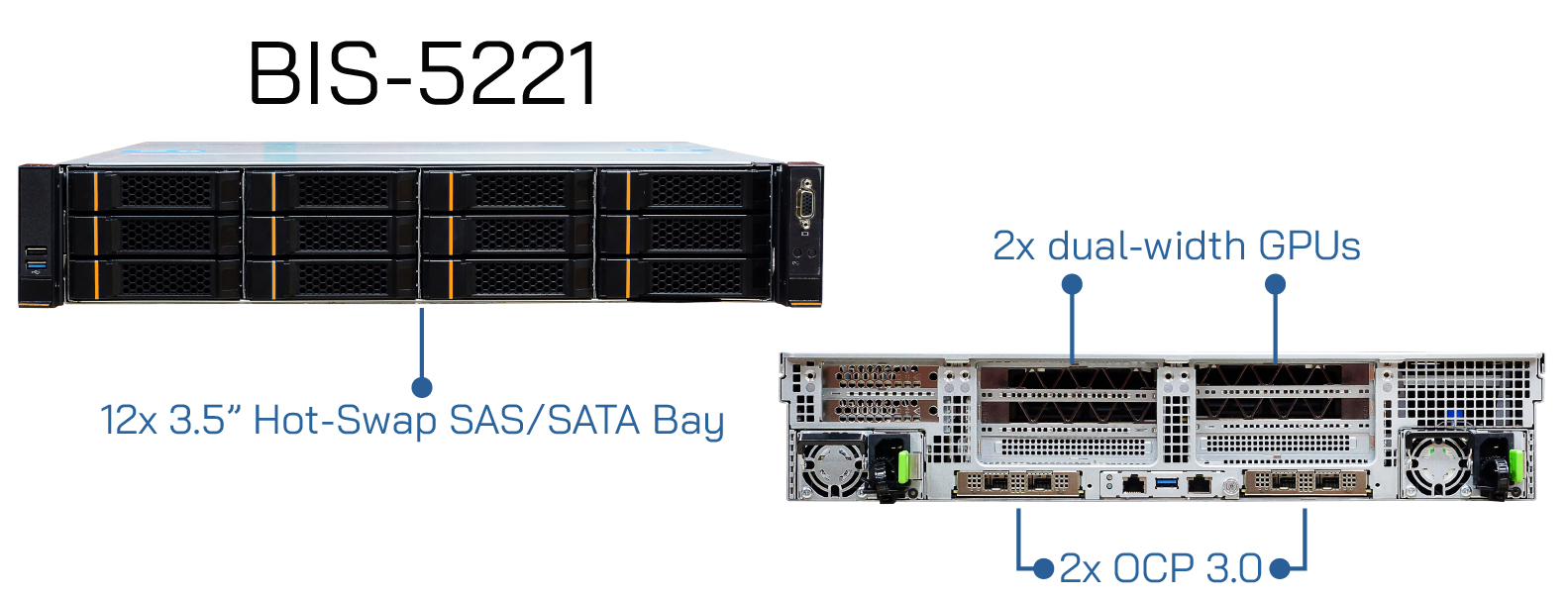

BIS-5221 is a 2U, 2-socket rackmount server based on Ice Lake-SP. This hyperconverged server platform can power all your applications. With 6x FHHL and 2x HHHL slots, the BIS-5221 offers great expandability. Up to two dual-width GPUs are supported to accelerate AI inferencing and video decoding/analytics workloads. It also offers 2x OCP 3.0 SFF slots, each with PCIe Gen4 x16 bandwidth, for the increasingly popular OCP 3.0 network cards. The generous number of PCIe lanes offered by Ice Lake-SP is what makes this expandability possible. A total of 32 memory slots support large memory capacities.

BIS-5101 is a single-socket Ice Lake-SP platform that can be paired with your choice of single- or dual-width accelerators with active or passive cooling. Its workstation form factor allows deployment in edge applications where a server room or prepared location is unavailable, while still offering high-performance edge AI inferencing. For flexibility, it can also be rack-mounted with the optional rail kit. BIS-5101 is a strategic device and a welcome addition for any business that wants to deploy an Edge AI appliance.

Intel is rapidly displacing application-specific micro-architectures around the world by offering familiar x86-based hardware. AEWIN can be your hardware partner, with flexible off-the-shelf designs plus customization and tuning options that speed up the development and deployment of your applications. Have a chat with our friendly sales representatives and discover how AEWIN platforms can take your applications to the next level.

This article, AEWIN's Intel Product Series | Network Appliances | Edge Computing Servers | General-Purpose Servers, first appeared on AEWIN.

AEWIN Technologies provides smartly designed Intel-based platforms for customers of any scale. With 20 years of experience building high-performance network forwarding platforms, AEWIN has extensive knowledge of building secure and reliable network solution platforms. From network appliances to edge AI servers and cloud computing servers, we have experience with the full range of Intel® processors across a wide range of applications, with hardware solutions spanning the most powerful Xeon® SP processors to the efficient Atom® processors.

AEWIN systems can be key ingredients in your network transformation. A wealth of security options has evolved alongside the constantly changing landscape of cybersecurity threats, and a network appliance that hosts a suite of security appliances is an attractive way to augment or replace legacy networking systems. NFVI is the next networking paradigm, and our systems are ideal candidates to host the next generation of virtualized network appliances. We are fully committed to Intel Select Solutions and offer pre-validated, performance-optimized solutions covering a wide range of applications.

5G vRAN running on edge systems can be integrated into the MEC ecosystem, providing the flexibility and scalability needed to deliver services at the edge for the wave of new 5G-enabled devices that require a constant internet connection. This opens up a whole new world of applications, along with hardware requirements to match. Adding FPGAs and other application accelerators brings cloud-class compute power to edge servers. For the new wave of applications powered by artificial intelligence, AEWIN offers application-optimized SKUs for Edge AI performance. With enhanced thermal designs to handle high-powered accelerators, these Edge AI servers are well suited to AI training and inferencing at the edge, within a MEC stack or inside a data center.

This article, AEWIN Edge AI Server Accelerator Card Support Table, first appeared on AEWIN.

AEWIN edge computing servers fall into three categories for AI applications: data center, accelerated edge, and workstation. The data center hybrid storage servers focus on high performance and accelerator longevity, and support passively cooled, data-center-focused GPU accelerators. The accelerated edge computing systems include AEWIN’s front-access mainstream and high-performance systems: the 2U systems support most accelerator cards, while the 1U systems are better served by lower-powered, inference-focused cards such as the T4. AEWIN’s workstation-class hardware is designed to accept the widest possible range of accelerators, including consumer-focused GeForce RTX series cards.

NVIDIA accelerators come in several tiers, and the majority of use cases rely on the T4 for inferencing. For heavier inferencing applications, or INT8 and FP16 workloads, the A10/A30 class of accelerators is well suited. These cards also offer significantly better training performance than the basic T4, making them ideal for workloads that are inference-heavy but occasionally used for training. For training-heavy workloads or heavy double-precision (FP64) calculations, NVIDIA’s V100 and A100 accelerators are the best candidates.
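The tier guidance in this paragraph can be captured as a simple lookup table. This is a hypothetical helper that only encodes the rules of thumb stated above; the workload labels and function name are ours, and it is not an AEWIN or NVIDIA sizing tool.

```python
from enum import Enum


class Workload(Enum):
    """Hypothetical workload profiles matching the tiers described above."""
    INFERENCE = "inference"                       # typical inferencing
    HEAVY_INFERENCE = "heavy_inference"           # heavier INT8/FP16 inferencing
    INFERENCE_OCCASIONAL_TRAINING = "mixed"       # inference-heavy, some training
    TRAINING = "training"                         # training-heavy
    FP64 = "fp64"                                 # heavy double-precision compute


# Rules of thumb taken from the paragraph above, not from a vendor tool.
SUGGESTED_ACCELERATORS = {
    Workload.INFERENCE: ["T4"],
    Workload.HEAVY_INFERENCE: ["A10", "A30"],
    Workload.INFERENCE_OCCASIONAL_TRAINING: ["A10", "A30"],
    Workload.TRAINING: ["V100", "A100"],
    Workload.FP64: ["V100", "A100"],
}


def suggest_accelerators(workload: Workload) -> list[str]:
    """Return a copy of the accelerator tier suggested for a workload."""
    return list(SUGGESTED_ACCELERATORS[workload])


print(suggest_accelerators(Workload.HEAVY_INFERENCE))  # ['A10', 'A30']
```

Keeping the mapping in a single dictionary makes it easy to adjust as accelerator generations change, without touching the lookup logic.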

This accelerator support guide will help you choose the right hardware for your specific AI requirements, and shows all the possible configurations so you have an upgrade path should your computing requirements change.

| System type | AI, HPC & Data Processing (A100/V100) | Mainstream Compute (A30/A10) | Inference (T4) | On Premise & Graphics (Quadro / GeForce) |
|---|---|---|---|---|
| General Purpose / Storage | | | | |
| Edge Computing | | | | |
| Workstation | | | | |

This article, OT008 Hybrid Network Cryptography Accelerator Card, first appeared on AEWIN.

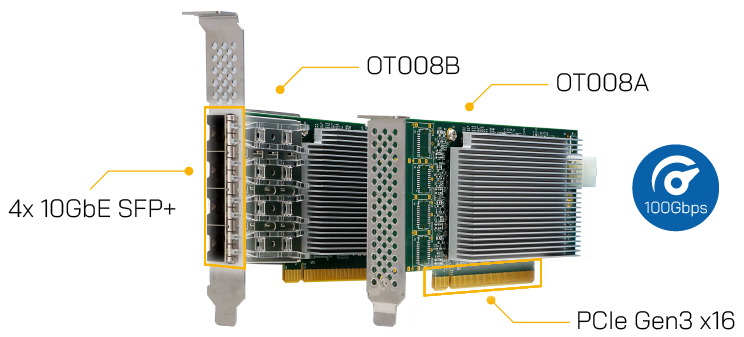

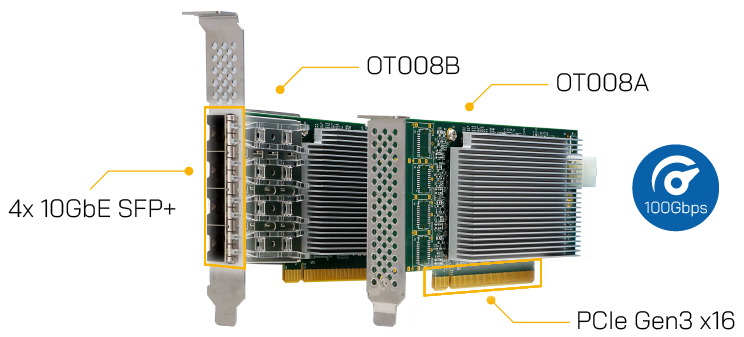

To enhance data security, AEWIN has launched the OT008 crypto accelerator, which uses Intel QuickAssist Technology (QAT) to secure data transport and protection across servers, storage, networks, and VM migration. OT008 can accelerate important wireless hashing and ciphering algorithms used in 5G networks, such as AES, SNOW 3G, and ZUC.

As enterprises and organizations around the globe look for more economical ways to build their own private 5G networks, there is a growing need for off-the-shelf hardware that provides critical cryptographic acceleration, reducing overall hardware expenditure and freeing up costly CPU resources for other tasks. AEWIN’s OT008 is designed to excel at this application, with an on-board cryptographic engine as well as 4x 10Gbps SFP+ Ethernet connections. This all-in-one package provides both security acceleration and additional networking ports.

Beyond 5G, OT008 is also well suited to data centers and enterprise servers. The Intel QAT toolkit supports a wide range of cryptographic algorithms commonly used in network communication, data storage, and signal processing. Hashing algorithms can detect changes to stored data or files, helping to prevent unauthorized changes or to detect transmission errors. With 4x 10Gbps network connections on board, OT008 also frees up valuable PCIe slots in a server for other add-on cards.
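The change-detection idea works by storing a digest of the data and recomputing it later; any modification produces a different digest. The sketch below shows the concept in plain software using Python’s standard `hashlib`, with SHA-256 chosen as an example algorithm. It does not use QAT; an accelerator such as OT008 would offload this kind of computation from the CPU through Intel’s own software stack.

```python
import hashlib
import hmac


def sha256_digest(data: bytes) -> str:
    """Return the hex SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def is_unchanged(data: bytes, expected_digest: str) -> bool:
    """Recompute the digest and compare it with the stored one.

    hmac.compare_digest performs a constant-time comparison, so the check
    does not leak timing information about where the digests differ.
    """
    return hmac.compare_digest(sha256_digest(data), expected_digest)


original = b"firmware image v1.0"
stored = sha256_digest(original)  # recorded when the data was written

print(is_unchanged(original, stored))                # True
print(is_unchanged(b"firmware image v1.1", stored))  # False: data was altered
```

A bare hash detects accidental corruption; to guard against deliberate tampering, the stored digest itself must be protected, for example by using a keyed HMAC or a digital signature instead.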

This new QAT adapter is a standard half-height, half-length PCIe card with a PCIe Gen 3 x16 connection. Powered by Intel’s C627 PCH, it provides up to 100Gbps of AES-128 throughput and up to 472k ops/sec of RSA performance. It can be installed in AEWIN’s data-center-oriented servers, as well as in network computing platforms through a PCIe conversion kit. Please contact AEWIN sales representatives to see how to integrate QAT into your AEWIN servers.

Intel® C627 PCH

4x 10GbE SFP+ Ports (optional)

Intel® QAT for Crypto and Compression, Acceleration up to 100Gbps

This article, AEWIN partners with UNISEM to launch Edge AI solutions, first appeared on AEWIN.

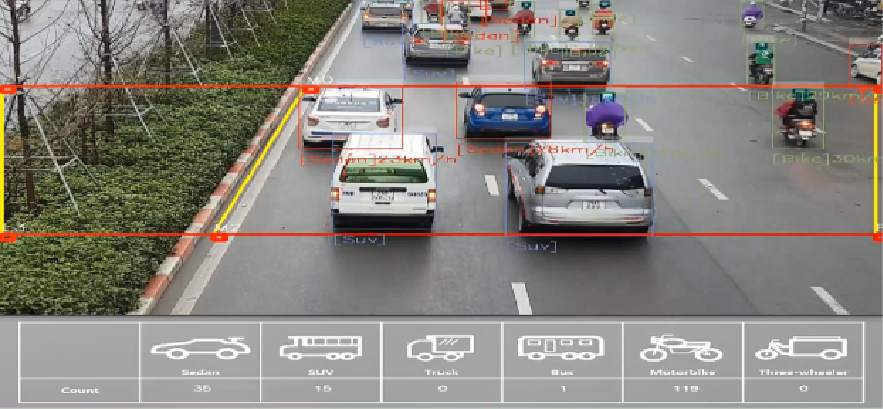

UniTraffic is a building block of the future smart city. This traffic management technology uses deep-learning-based image processing and multi-object detection to count and classify on-road objects such as pedestrians, automobiles, motorcycles, and trucks. At the same time, it can detect the license plate numbers of vehicles that violate traffic regulations. It achieves 99% accuracy for real-time multi-object recognition. UniTraffic helps city planners by providing statistics and traffic flow patterns for planning future infrastructure development and upgrades. Likewise, for city administrations and police departments, UniTraffic can reduce the time spent issuing tickets and lower personnel costs.
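The counting step described above can be pictured as tallying a frame’s detections by class. In this minimal sketch the `Detection` record, the class labels, and the 0.5 confidence threshold are hypothetical placeholders; it is not UNISEM’s implementation, and a real multi-object system would also track objects across frames so that the same vehicle is not counted twice.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical detector output: a class label and a confidence score."""
    label: str
    confidence: float


def count_by_class(detections, min_confidence=0.5):
    """Count detections per class, ignoring low-confidence results."""
    return Counter(d.label for d in detections if d.confidence >= min_confidence)


frame = [
    Detection("pedestrian", 0.91),
    Detection("automobile", 0.88),
    Detection("automobile", 0.76),
    Detection("motorcycle", 0.42),  # below the threshold, so not counted
    Detection("truck", 0.67),
]
print(count_by_class(frame))
# Counter({'automobile': 2, 'pedestrian': 1, 'truck': 1})
```

Per-frame counts like these could then be aggregated over time to produce the kind of traffic-flow statistics mentioned above.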

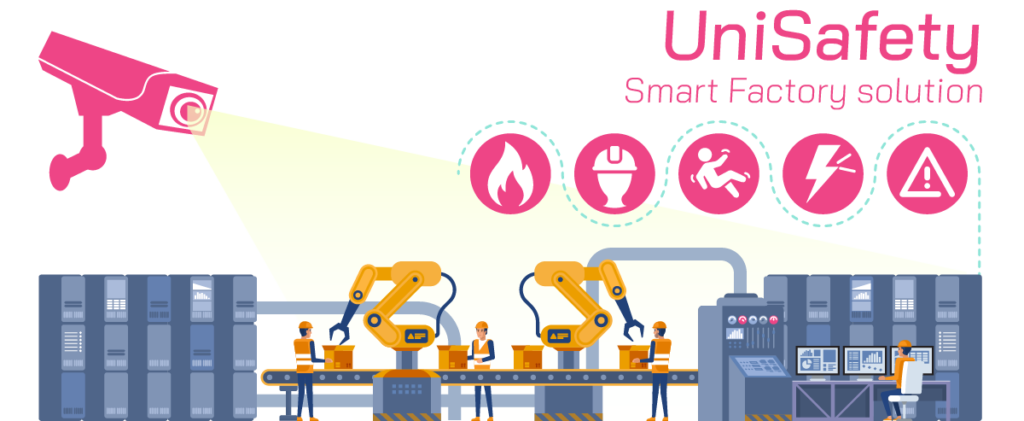

UniSafety is based on video analytics technology enhanced with deep learning that detects various dangerous situations that can occur during production, such as fire, falling materials, abnormal processes, crush injuries, failure to wear safety equipment, and intrusion. When an accident or unusual situation occurs, the system notifies safety supervisors via web dashboard notifications, text messages, and loudspeaker announcements. It also offers motion detection to catch unauthorized access or intrusions, which allows a more open workspace without barriers to keep out intruders.

“UNISEM’s vision aligned closely with AEWIN’s hardware development roadmap. The continued relationship allowed us to integrate designs optimized for UNISEM’s specific hardware requirements,” said Charles Lin, CEO at AEWIN. “Today’s announcement is the result of the past few years of cooperation. Through repeated testing and benchmarking, AEWIN has thoroughly investigated the performance characteristics of the UNISEM software suite, which allows us to provide a workload-optimized total solution.”

“AEWIN has been exceedingly helpful in our quest to find the right hardware to host our solutions. AEWIN has not only provided insights into hardware design and selection, but has also taken our software in-house to provide customized firmware specific to our workloads,” said Jung Boo Eun, Managing Director of UNISEM. “AEWIN hardware greatly outperforms other off-the-shelf hardware. We’re excited to deepen our partnership with AEWIN to provide workload-optimized solutions that will help you change the world.”

Learn More:

https://www.aewin.cn

https://www.UNISEMiot.com/

https://unisemiot.com/content/unitraffic-introduction/

https://unisemiot.com/content/unisafety-introduction/

UniTraffic:

https://www.aewin.cn/application/ai-deep-learning-traffic-management-solution-unitraffic/

UniSafety:

https://www.aewin.cn/application/ai-based-computer-vision-solution-unisafety/

This article, AEWIN and D8AI Partner to Launch Edge AI Solutions, first appeared on AEWIN.

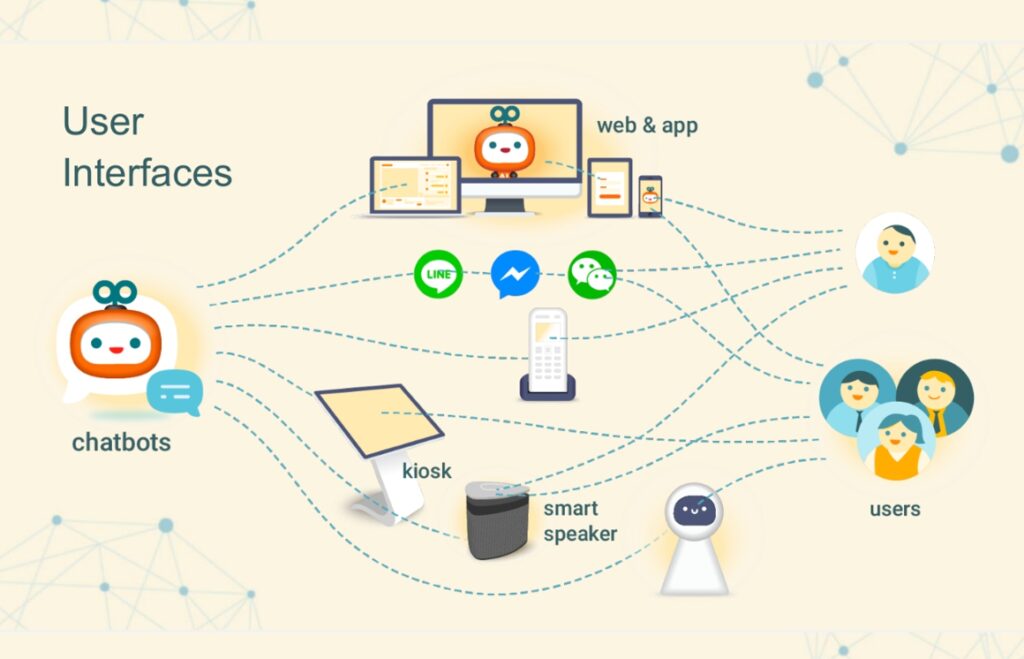

The D8AI Chatbot solution is an enterprise-grade customer care AI designed to provide relevant and timely assistance and responses. It integrates with many existing messaging and social media platforms through a single unified management backend, which makes user interactions easy to manage and monitor.

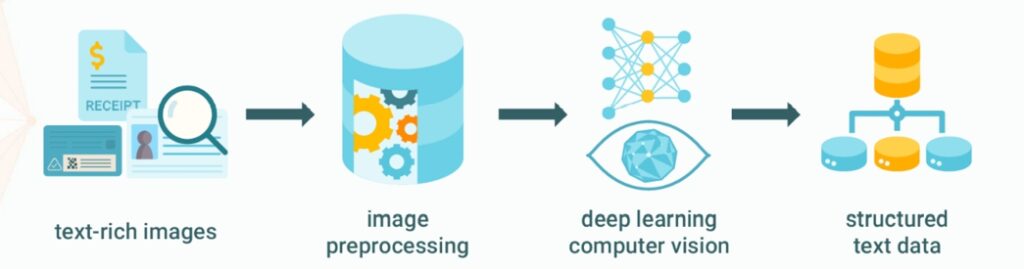

NLP Document Recognizer uses a proprietary AI-enabled data extraction technology that can process multiple optical and text data types simultaneously, including Chinese, English, barcodes, QR codes, and other digital data. It helps organizations speed up the digitization of paper documents for processing and storage in databases. By combining deep learning and computer vision, it finds structure in the data, which can then be used for predictive analytics.

Through this partnership, AEWIN has gained deep insight into AI technology, which allowed us to customize the BIOS and firmware for optimized results and to choose hardware specifications tuned to exacting needs.

“D8AI solutions offer an attractive option for enterprises and organizations to leverage the latest technologies to augment their internal processes. AEWIN’s computing platforms are an essential part of the equation,” said Charles Lin, CEO at AEWIN. “With a balance of hardware and firmware optimization, we are able to achieve substantial performance gains. We are proud to present this solution to the market as an example of what can be accomplished through tight cooperation between hardware and software partners.”

“AEWIN has been an indispensable partner. Their hardware and BIOS tuning expertise gives us the computing performance we sought. AEWIN’s hardware provides significant advantages over other servers on the market,” said Dr. Richard Sheng, President and CTO of D8AI. “Through our partnership, we have gained a reliable partner that provides insights into hardware designs and architectures, which feed directly back into our software architecture so we can take advantage of the latest hardware innovations.”

Learn More:

https://www.aewin.cn/

https://www.d8ai.com/

Chatbot Solutions: https://www.aewin.cn/application/aewins-d8ai-chatbot-solution/

NLP Document Recognizer solution: https://www.aewin.cn/application/aewins-d8ai-nlp-document-recognizer-solution/

This article, High-Performance Edge Computing Platform SCB-1937, first appeared on AEWIN.

EPYC 7003 series processors feature the Zen 3 architecture, which brings a host of architectural improvements with large performance and efficiency gains. The chiplet and I/O die design has been further refined and optimized, and under-the-hood tweaks deliver a nearly 20% gain in instructions per clock (IPC). This allows the new EPYC processors to be both wide and fast. They still offer up to 128 lanes of PCIe Gen4 per CPU for attaching the fastest network cards or accelerators.

SCB-1937 supports the full range of AEWIN Network Expansion Modules, including 1/10/25/40/100 Gigabit fiber and copper modules, with or without bypass function. The maximum Ethernet port count is 64x 1GbE or 8x 100GbE. This combination makes the SCB-1937 a powerhouse for any network packet processing workload, with countless port configurations to suit deployment in any situation.
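The maximum port counts can be related to the eight Network Expansion Module slots listed in the SCB-1937 specifications: 64x 1GbE works out to eight ports per module, and 8x 100GbE to one port per module. That per-module split is our reading of the figures, not a stated specification. The sketch also checks that a PCIe x8 link has room for one 100GbE port, assuming the slots run at PCIe Gen4 (16 GT/s per lane, 128b/130b encoding) and ignoring packet-level PCIe overhead, so real usable bandwidth is somewhat lower than computed.

```python
NEM_SLOTS = 8  # "8x PCIe x8 for Network Expansion Module" in the SCB-1937 specs


def ports_per_module(total_ports: int, slots: int = NEM_SLOTS) -> int:
    """Ports each module must provide to reach a quoted maximum port count."""
    if total_ports % slots:
        raise ValueError("port count does not divide evenly across the slots")
    return total_ports // slots


def pcie_gen4_gbps(lanes: int) -> float:
    """Approximate PCIe Gen4 bandwidth per direction in Gbit/s.

    Assumes 16 GT/s per lane with 128b/130b line encoding. Transaction-layer
    (packet header) overhead is ignored, so usable throughput is lower.
    """
    return 16.0 * lanes * 128 / 130


print(ports_per_module(64))  # 8 -> eight 1GbE ports per module
print(ports_per_module(8))   # 1 -> one 100GbE port per module

x8 = pcie_gen4_gbps(8)
print(f"Gen4 x8: {x8:.1f} Gbit/s per direction")  # Gen4 x8: 126.0 Gbit/s per direction
print(x8 > 100)  # True: raw link rate exceeds one 100GbE port
```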

AEWIN’s network computing platform is also ideal for network edge applications such as MEC, Edge AI, and vRAN. Its generous number of PCIe lanes allows many accelerators to be installed; for MEC and Edge AI applications, GPU accelerators can be added to provide a massive boost to inference performance. SCB-1937 is a flexible network computing platform ready for your applications. Speak to AEWIN’s friendly sales team to see how to configure the SCB-1937 as the foundation of your next application.

DIGITIMES, a publication specializing in the global IT and semiconductor sectors, has reported on AEWIN and the SCB-1937. Head over to DIGITIMES to read the article, which details how the SCB-1937 is used in customer applications.

https://www.digitimes.com/news/a20210524PR200.html?mod=3&q=AMD+Milan+CPU&chid=9

SCB-1937 Network Appliance | Edge Computing Server

● Supports dual AMD® EPYC 7003/7002 (Milan/Rome) processors
● 16x DDR4-3200 memory
● 8x PCIe x8 for Network Expansion Modules
● 2x 2.5” HDD/SSD, 2x M.2 2280 & 1x mSATA
● Supports OT004A Trusted Secure Boot modules

This article, AEWIN Releases the Latest QAT Accelerator – OT008, first appeared on AEWIN.

Intel® C627 PCH

4x 10GbE SFP+ Ports (optional)

Intel® QAT for Crypto and Compression, Acceleration up to 100Gbps

This article, Intel E810 Networking Solutions, first appeared on AEWIN.

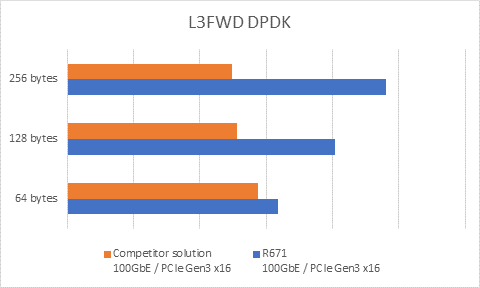

Figure 1 – 100GbE Network card L3FWD Benchmark vs Competitor

| Model | Controller | Ports | Supported features |
|---|---|---|---|
| R671 | 1x Intel® E810 | 1x or 2x 100GbE QSFP28 | ADQ, DDP, DPDK, RDMA, SR-IOV, VMDq |
| R671 | 1x Intel® E810-CAM1 | 4x 25GbE SFP28 | ADQ, DDP, DPDK, RDMA, SR-IOV, VMDq |

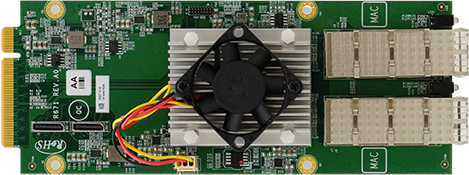

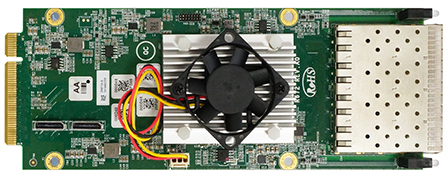

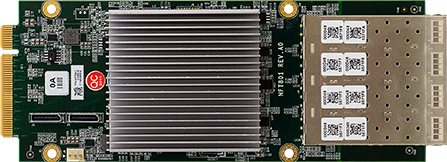

| Model | Controller | Ports | Supported features |
|---|---|---|---|
| NFT801 | 1x Intel® E810-CAM2 | 8x 10GbE SFP+ | VMDq, SR-IOV, DDP, DPDK |

This article, AEWIN Launches Edge Computing Servers with the Latest 3rd Generation Intel Xeon Scalable Processors (Ice Lake-SP), first appeared on AEWIN.

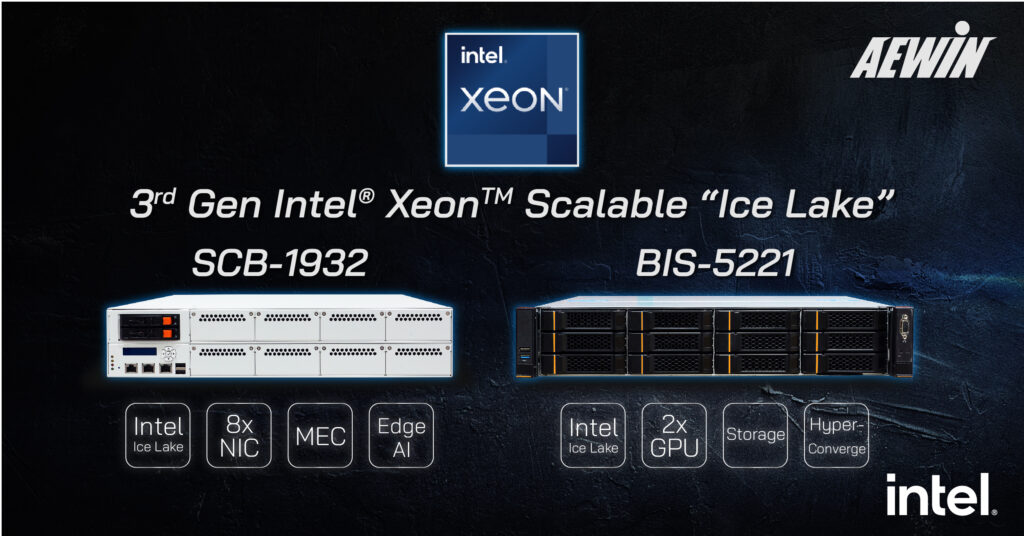

Intel has launched the rest of its 3rd Generation Xeon Scalable processors, based on the advanced Ice Lake-SP architecture. AEWIN is proud to launch its first wave of systems supporting these processors: the SCB-1932 and BIS-5221.

The SCB-1932 is a 2U, 2-socket network computing platform that is highly configurable via PCIe accessories and Network Expansion Modules. With up to 8x PCIe Gen 4 expansion module slots, this versatile system can satisfy many use cases in a single chassis. Supporting multiple GPU accelerators and 100GbE networking, the platform is ideal for NFVI and MEC/vRAN applications. Its short 600mm chassis depth allows it to fit in the diverse locations where edge servers may be deployed.

BIS-5221 is a 2U, 2-socket rackmount server based on Ice Lake-SP. This hyperconverged server platform can power all your applications. With 6x FHHL and 2x HHHL slots, the BIS-5221 offers great expandability. Up to two dual-width GPUs are supported to accelerate AI inferencing and video decoding/analytics workloads. It also offers 2x OCP 3.0 SFF slots, each with PCIe Gen4 x16 bandwidth, for the increasingly popular OCP 3.0 network cards. In addition, the OCP 3.0 specification allows these cards to be replaced without opening the chassis. The generous number of PCIe lanes offered by Ice Lake-SP is what makes this expandability possible. A total of 32 memory slots support large memory capacities.

Ice Lake-SP has been further refined over the previous generation, adding support for Intel Optane Persistent Memory 200 series (code name Barlow Pass). Performance improvements come from core counts of up to 40 cores per socket and up to eight channels of DDR4-3200 memory. Platform security has also been enhanced: in addition to SGX, already introduced on previous platforms, TME (Total Memory Encryption), Crypto Acceleration, and Intel PFR (Platform Firmware Resilience) help harden the platform against malicious actions. Ice Lake-SP is a giant leap in every metric compared to previous platforms. Have a chat with our friendly sales representatives and discover how Ice Lake-SP can take your applications to the next level.
